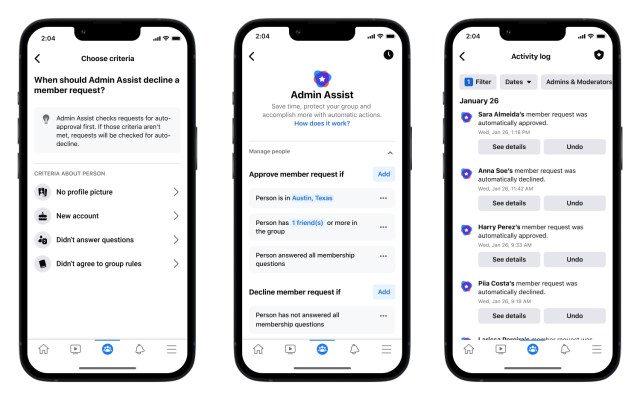

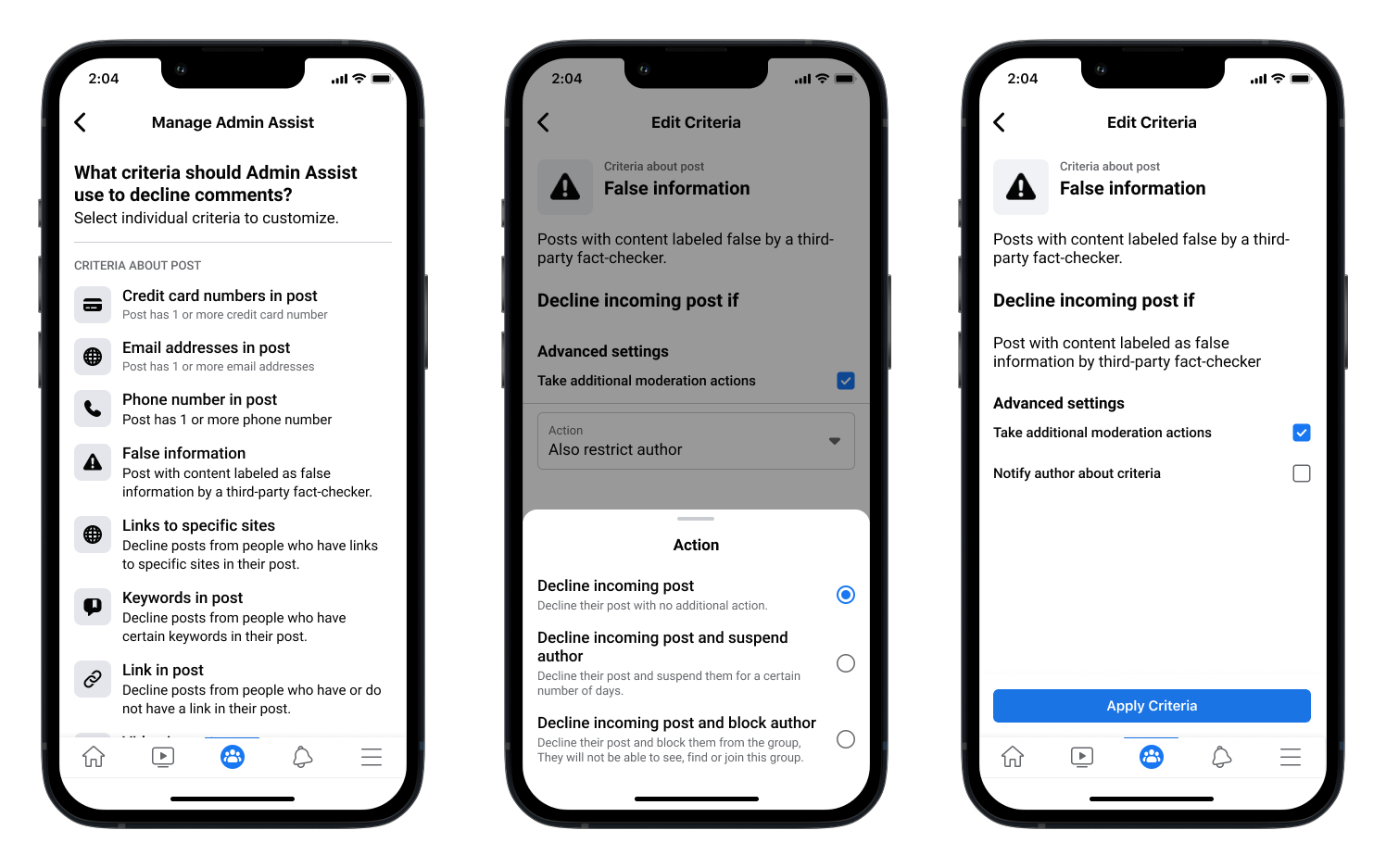

Facebook announced today that it's rolling out new features to help Facebook Group administrators keep their communities safe, manage interactions and reduce misinformation. Most notably, the company has added the option for admins to automatically decline incoming posts that have been identified as containing false information by third-party fact-checkers. Facebook says this new tool will help admins prevent the spread of misinformation in their groups.

The company is also expanding its "mute" function and updating it to "suspend," so admins can temporarily suspend members from posting, commenting, reacting, participating in group chats and more. The new feature is designed to make it easier for admins to manage interactions in their groups and limit bad actors.

Image Credits: Meta

In addition, admins can now automatically approve or decline member requests based on specific criteria they set up, such as whether the applicant has answered the membership questions. The group's "Admin Home" page is being updated, too, to include an overview section on desktop that makes it easier for admins to quickly review items that need attention. On mobile, there's a new insights summary to help admins understand the growth and engagement of their groups.

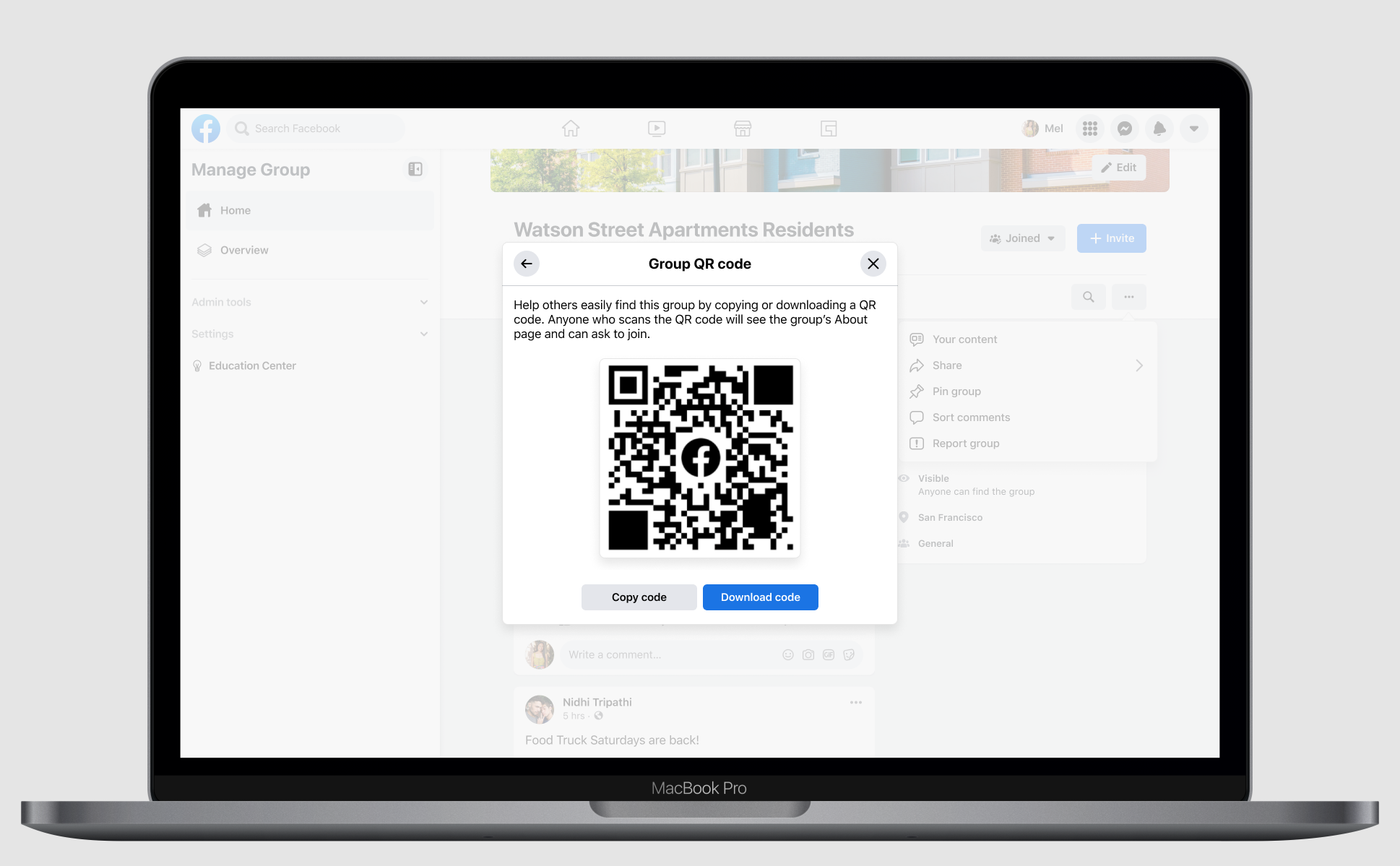

Facebook is also introducing new tools to help admins who want to grow their groups and find relevant people to join their communities.

The company has added the option for admins to send email invitations asking people to join their group. It has also added QR codes that admins can download and share however they like, including offline. When someone scans the QR code, they'll be directed to the group's "About" page, where they can join or request to join.

The new changes have rolled out to all users globally.

Image Credits: Meta

Today's announcement comes as Facebook Groups have made headlines over the past few years for their growing use by those looking to spread harmful content and misinformation. Because they are often private, Facebook Groups have become breeding grounds for a range of dangerous content, including health misinformation, anti-science movements and conspiracy theories. The new features announced today focus on addressing some of these issues and giving admins more control over their communities, but they're arriving years late to the fight against online misinformation.

This isn't the first time that Facebook has given admins more control over their groups.

Last June, the company launched a new set of tools aimed at helping Facebook Group administrators get a better handle on their online communities. Among the more interesting tools was a machine-learning-powered feature that alerts admins to potentially unhealthy conversations taking place in their group. Another feature gave admins the ability to slow down the pace of a heated conversation by limiting how often group members can post. At the time, Facebook said there were "tens of millions" of groups managed by more than 70 million active admins and moderators worldwide.

Along with working to ensure that admins have the tools they need to manage their groups, Facebook is also focused on improving its Groups product overall. At its Facebook Communities Summit in November, the social networking giant announced a series of updates for Facebook Groups, including tools designed to help admins better develop their group's culture, as well as several other new additions such as subgroups and subscription-based paid subgroups, real-time chat for moderators, support for group fundraisers and more. The company said these changes were made in anticipation of the role Groups will play in parent company Meta's upcoming plans for the "metaverse" it's building.